How We Test: CPU Gaming Benchmarks

This is a topic that's frequently raised when we do our CPU gaming benchmarks. As you know, we perform a ton of CPU and GPU benchmark tests throughout the year, a large portion of which are dedicated to gaming. The goal is to work out which CPU will offer you the most bang for your buck at a given price point, now and hopefully in the future.

Earlier in the year we compared the evenly matched Core i5-8400 and Ryzen 5 2600. Overall the R5 2600 was faster once fine-tuned, but ended up costing more per frame, making the 8400 the cheaper and more practical option for most gamers (side note: Ryzen 5 is a better buy today since it's dropped to $160).
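The cost-per-frame idea can be sketched in a few lines. This is only an illustration: the prices and frame rates below are hypothetical placeholders, not figures from our testing, and `cost_per_frame` is a name made up for this example.

```python
def cost_per_frame(price_usd: float, avg_fps: float) -> float:
    """Return the dollars paid for each frame per second of average performance."""
    if avg_fps <= 0:
        raise ValueError("avg_fps must be positive")
    return price_usd / avg_fps


# Hypothetical prices and average frame rates, for illustration only.
cpus = {
    "CPU A": {"price_usd": 180.0, "avg_fps": 120.0},
    "CPU B": {"price_usd": 200.0, "avg_fps": 125.0},
}

results = {name: round(cost_per_frame(**spec), 2) for name, spec in cpus.items()}
best_value = min(results, key=results.get)

for name, dollars in results.items():
    print(f"{name}: ${dollars:.2f} per frame")
print(f"Best value: {best_value}")
```

In this made-up case CPU B is the faster part, yet CPU A is the better value, which is the same kind of outcome described above.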

For that matchup we compared the two CPUs in 36 games at three resolutions. Because we want to use the maximum in-game visual quality settings and apply as much load as possible, the GeForce GTX 1080 Ti was our graphics weapon of choice. This helps to minimize GPU bottlenecks that can hide potential weaknesses when analyzing CPU performance.

When testing new CPUs we have two main goals in mind: #1 to work out how it performs right now, and #2 how 'future-proof' it is. Will it still be serving you well in a year's time, for example?

The problem is quite a few readers seem to get confused about why we're doing this and, I suspect without thinking it through fully, take to the comments section to bash the content for being misleading and unrealistic. It just happened again when we tested budget CPUs (Athlon 200GE vs. Pentium G5400 vs. Ryzen 3 2200G) and we threw in an RTX 2080 Ti.

Note: This feature was originally published on 05/30/2018. We have made small revisions and bumped it as part of our #ThrowbackThursday initiative. It's just as relevant as the day we posted it.

This is something we've seen time and time again and we've addressed it in the comments directly. Oftentimes other readers have also come to the rescue to inform their peers why tests are done in a certain way. But as the CPU scene has become more competitive again, we thought we'd address this topic more broadly and hopefully explain a little better why it is we test all CPUs with the most powerful gaming GPU available at the time.

As mentioned a moment ago, it all comes down to removing the GPU bottleneck. We don't want the graphics card to be the performance-limiting component when measuring CPU performance. There are a number of reasons why this is important to do and I'll touch on all of them in this article.

Let's start by talking about why testing with a high-end GPU isn't misleading and unrealistic...

Yes, it's true. It's unlikely anyone will want to pair a GeForce RTX 2080 Ti with a sub-$200 processor. However, when we pour dozens and dozens of hours into benchmarking a set of components, we aim to cover as many bases as we possibly can to give you the best possible buying advice. Obviously we can only test with the games and hardware that are available right now, and this makes it a little more difficult to predict how components like the CPU will behave in yet to be released games using more modern graphics cards, say a year or two down the track.

Assuming you don't upgrade your CPU every time you buy a new graphics card, it's important to determine how the CPU performs and compares with competing products when not GPU limited. That's because while you might pair your new Pentium G5400 processor with a modest GTX 1050 Ti today, in a year's time you might have a graphics card packing twice as much processing power, and in 2-3 years who knows.

So as an example, if we compared the Pentium G5400 to the Core i5-8400 with a GeForce GTX 1050 Ti, we would determine that in today's latest and greatest games the Core i5 provides no real performance benefit (see graph below). This means in a year or two, when you upgrade to something offering performance equivalent to that of the GTX 1080, you're going to wonder why GPU utilization is only hovering around 60% and you're not seeing anywhere near the performance you should be.

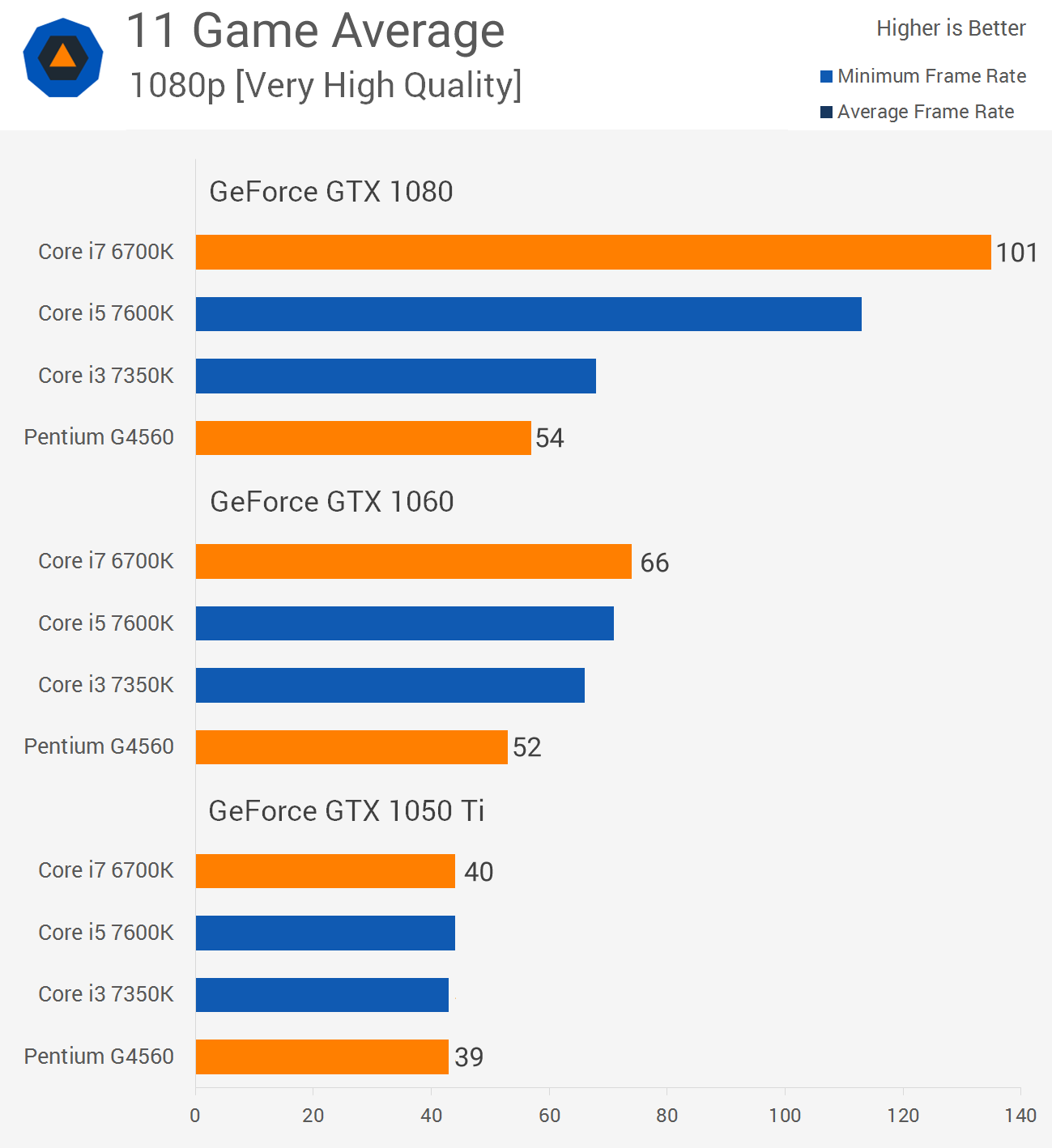
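A rough way to picture the bottleneck is to treat the frame rate you see as the lower of two ceilings: what the CPU can feed and what the GPU can render. The sketch below is a deliberately simplified model, not how games actually schedule work, and every number in it is a hypothetical placeholder rather than a measured result.

```python
def observed_fps(cpu_cap: float, gpu_cap: float) -> float:
    """Simplified model: the slower of the two components sets the frame rate."""
    return min(cpu_cap, gpu_cap)


def gpu_utilization(cpu_cap: float, gpu_cap: float) -> float:
    """Fraction of the GPU's ceiling that is actually reached in this model."""
    return observed_fps(cpu_cap, gpu_cap) / gpu_cap


# Hypothetical frame-rate ceilings, for illustration only.
cpu_caps = {"slower CPU": 90.0, "faster CPU": 135.0}
gpu_caps = {"entry-level GPU": 60.0, "high-end GPU": 150.0}


def cpu_gap_percent(gpu_cap: float) -> float:
    """How much faster the faster CPU appears when paired with a given GPU."""
    slow = observed_fps(cpu_caps["slower CPU"], gpu_cap)
    fast = observed_fps(cpu_caps["faster CPU"], gpu_cap)
    return (fast / slow - 1.0) * 100.0


for gpu_name, cap in gpu_caps.items():
    print(f"{gpu_name}: faster CPU leads by {cpu_gap_percent(cap):.0f}%")

util = gpu_utilization(cpu_caps["slower CPU"], gpu_caps["high-end GPU"])
print(f"Slower CPU with high-end GPU: {util:.0%} GPU utilization")
```

With the entry-level card both CPUs land on the same number, so the gap is invisible. With the high-end card the gap appears, and the slower CPU leaves a large share of the GPU idle.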

Here's another example we can use: in early 2017, during the Pentium G4560's release, we published a GPU scaling test where we observed that a GTX 1050 Ti was no faster on the Core i7-6700K than with the Pentium processor.

However, using a GTX 1060 the Core i7 was shown to be 26% faster on average, meaning the G4560 had already created a system bottleneck, but we could only know this because a higher-end GPU was used for testing. With the GTX 1080 we see that the 6700K is almost 90% faster than the G4560. By this time next year the GTX 1080 will be delivering mid-range performance at best, much like what we see when comparing the GTX 980 and GTX 1060, for example.

Now, with this example you might say well the G4560 was just $64, while the 6700K cost $340, of course the Core i7 was going to be miles faster. We don't disagree. But in this 18-month-old example we can see that the 6700K had significantly more headroom, something we wouldn't have known had we tested with the 1050 Ti or even the 1060.
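The scaling pattern in that example can be expressed as a simple check: if the faster CPU's lead collapses to almost nothing with a given GPU, that GPU is probably hiding the difference. In the sketch below the frame rates are normalized so the Pentium equals 100 with each card; only the ratios (no faster, 26% faster, almost 90% faster) come from the example above, and the 5% cut-off is an arbitrary assumption.

```python
GPU_BOUND_THRESHOLD_PCT = 5.0  # hypothetical cut-off, chosen for illustration


def uplift_percent(slow_fps: float, fast_fps: float) -> float:
    """Percentage by which the faster CPU beats the slower one."""
    return (fast_fps - slow_fps) / slow_fps * 100.0


def looks_gpu_bound(slow_fps: float, fast_fps: float,
                    threshold_pct: float = GPU_BOUND_THRESHOLD_PCT) -> bool:
    """Flag a result where the CPUs are too close together to tell apart."""
    return abs(uplift_percent(slow_fps, fast_fps)) < threshold_pct


# Normalized index values (G4560 = 100 with each GPU), not real frame rates.
normalized = {
    "GTX 1050 Ti": (100.0, 100.0),
    "GTX 1060": (100.0, 126.0),
    "GTX 1080": (100.0, 190.0),
}

for gpu, (g4560, i7_6700k) in normalized.items():
    gain = uplift_percent(g4560, i7_6700k)
    verdict = "likely GPU bound" if looks_gpu_bound(g4560, i7_6700k) else "CPU difference visible"
    print(f"{gpu}: Core i7 +{gain:.0f}% ({verdict})")
```

Only the GTX 1050 Ti row is flagged, which matches the point that the weaker card told us nothing about the headroom of either CPU.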

You could also argue that even today at an extreme resolution like 4K there would be little to no difference between the G4560 and 6700K. That might be true for some titles, but it won't be for others like Battlefield 1 multiplayer, and it certainly won't be true in a year or two when games become even more CPU demanding.

Additionally, don't fall into the trap of assuming everybody uses Ultra quality settings or targets just 60 fps. There are plenty of gamers using a mid-range GPU who opt for medium to high, and even low settings to push frame rates well past 100 fps, and these aren't just gamers with high refresh rate 144 Hz displays. Despite popular belief there is a serious advantage to be had in fast-paced shooters by going well beyond 60 fps on a 60 Hz display, but that's a discussion for another time.

Getting back to the Kaby Lake dual-core for a moment, swapping out a $64 processor for something higher-end isn't a big deal, which is why we gave the ultra affordable G4560 a rave review. But if we're comparing more expensive processors such as the Core i5-7600K and Ryzen 5 1600X for example, it's very important to test without GPU limitations...

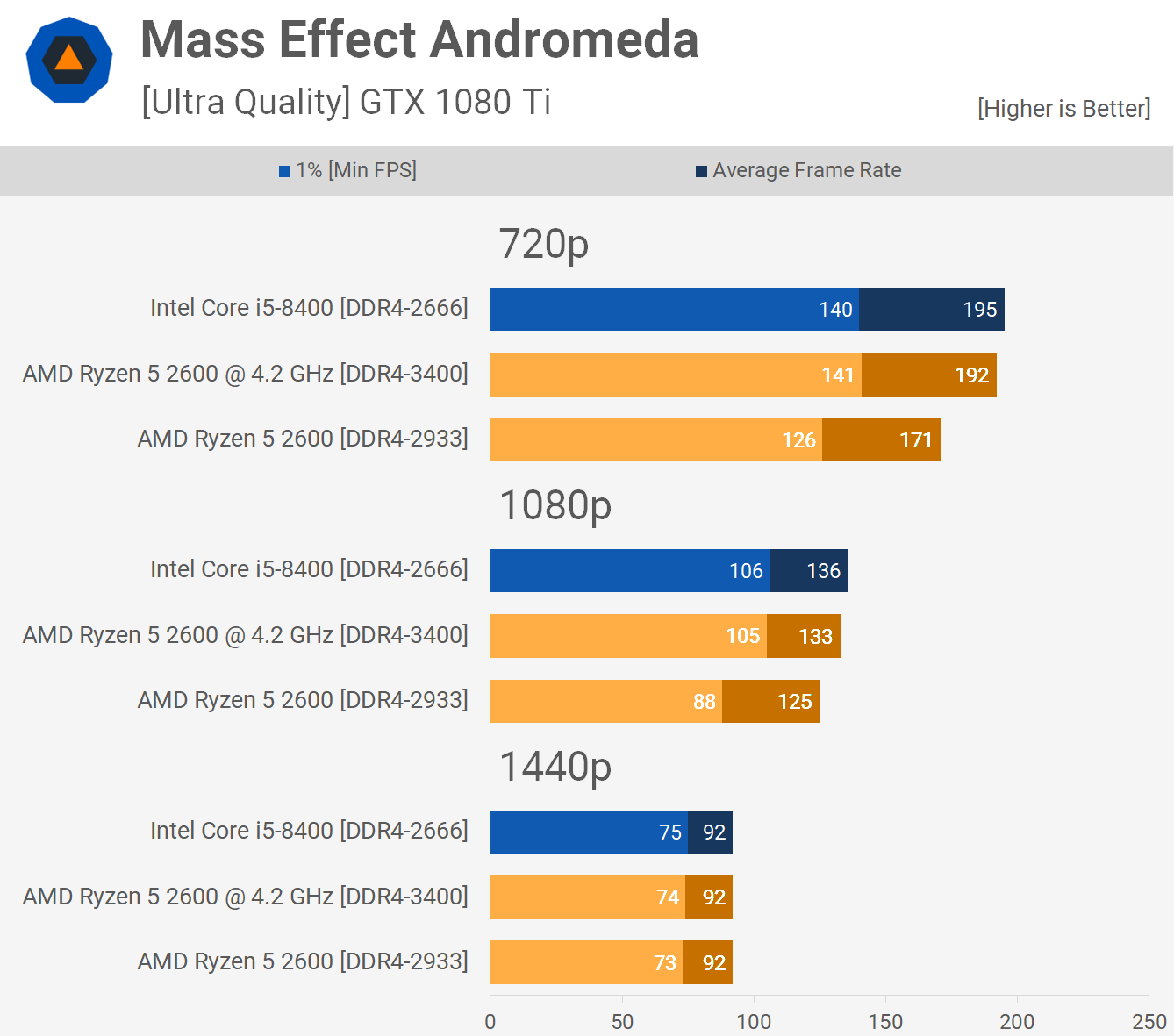

Back to our discussion of the Core i5-8400 vs. Ryzen 5 2600 comparison featuring three tested resolutions, let's take a quick look at the Mass Effect Andromeda results. Those performance trends look quite similar to the previous graph, don't they? You could almost rename 720p to GTX 1080, 1080p to GTX 1060 and 1440p to GTX 1050 Ti.

Since many suggested that these two sub-$200 CPUs should have been tested with a GPU packing a sub-$300 MSRP, let's see what that would have looked like at our three tested resolutions.

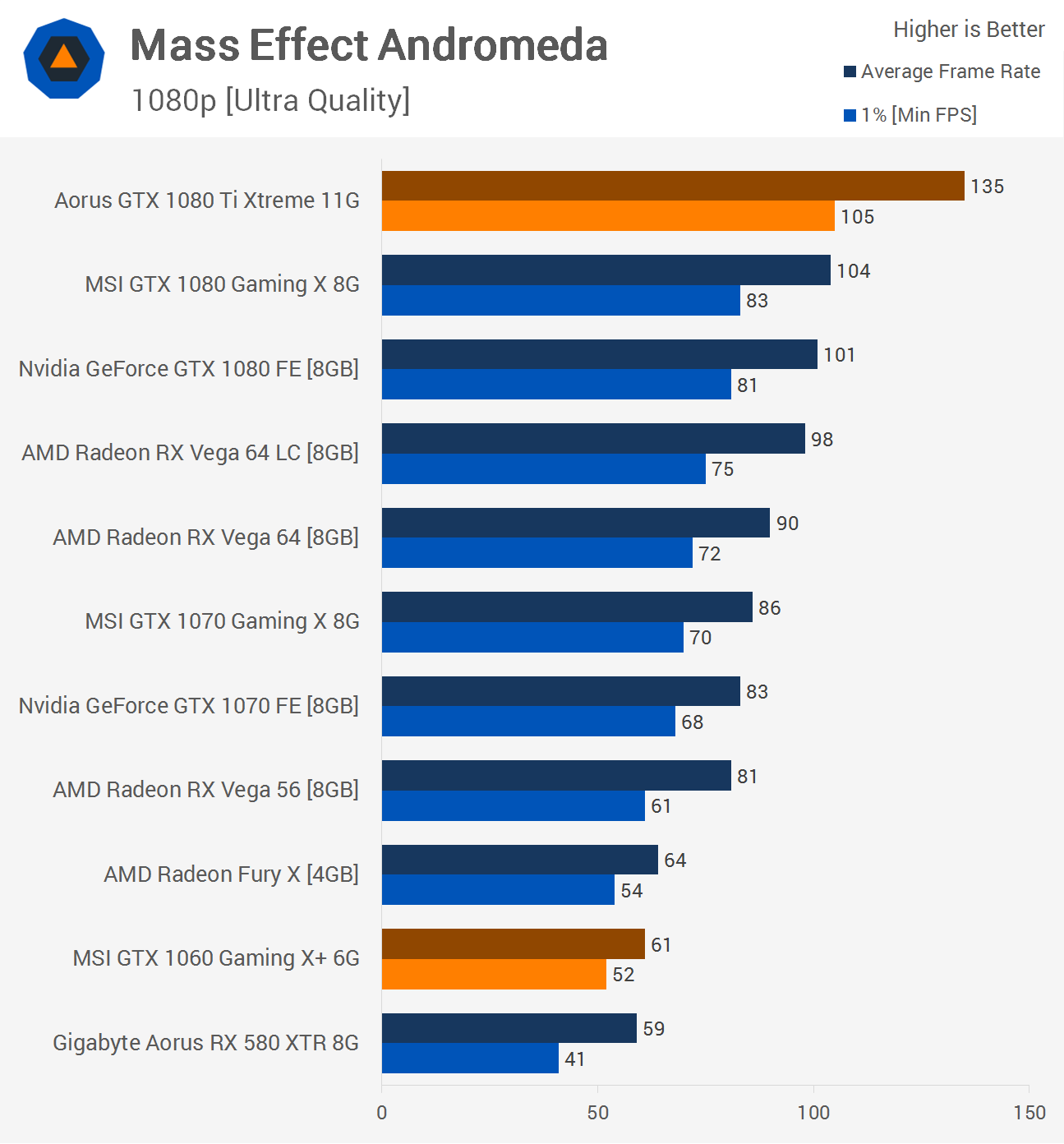

Now, we know the GTX 1060 has 64% fewer CUDA cores than the GTX 1080 Ti, and in Mass Effect Andromeda that leads to around 55% fewer frames at 1080p and 1440p using a Core i7-7700K clocked at 5 GHz. We see that in these two graphs from my 35 game Vega 56 vs. GTX 1070 Ti benchmark conducted last year. The GTX 1060 spat out 61 fps on average at 1080p and just 40 fps at 1440p.
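For anyone who wants to check the arithmetic, here is a quick sketch. It assumes the 6GB GTX 1060 (1,280 CUDA cores) and the GTX 1080 Ti (3,584 CUDA cores), which are the published spec-sheet figures. The implied GTX 1080 Ti frame rates are back-calculated from the "around 55%" figure, so treat them as rough estimates rather than measured results.

```python
GTX_1060_6GB_CUDA_CORES = 1280  # assumption: the 6GB model, per public specs
GTX_1080_TI_CUDA_CORES = 3584   # per public specs


def percent_fewer(smaller: float, larger: float) -> float:
    """Percentage by which `smaller` falls short of `larger`."""
    return (1.0 - smaller / larger) * 100.0


def implied_reference_fps(measured_fps: float, percent_fewer_frames: float) -> float:
    """Back out the faster card's frame rate from the slower card's deficit."""
    return measured_fps / (1.0 - percent_fewer_frames / 100.0)


core_deficit = percent_fewer(GTX_1060_6GB_CUDA_CORES, GTX_1080_TI_CUDA_CORES)
print(f"CUDA core deficit: {core_deficit:.1f}%")

# GTX 1060 averages quoted above: 61 fps at 1080p, 40 fps at 1440p.
for resolution, fps in {"1080p": 61.0, "1440p": 40.0}.items():
    estimate = implied_reference_fps(fps, 55.0)
    print(f"{resolution}: GTX 1060 {fps:.0f} fps, implied GTX 1080 Ti ~{estimate:.0f} fps")
```

Note that the frame-rate deficit (around 55%) is smaller than the core-count deficit (around 64%), so performance does not scale one-to-one with CUDA cores.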

Graph below: GTX 1060 (red) vs GTX 1080 Ti (full bar)

So here's where the GTX 1060 is situated on our graph in relation to the GTX 1080 Ti. The first red line indicates the 1% low result and the second red line the average frame rate. Even at 720p we are massively GPU bound. Had I only tested with the GTX 1060 or maybe even the 1070, all the results would have shown us that both CPUs can max those particular GPUs out in modern titles, even at extremely low resolutions.

In fact, you could throw the Core i3-8100 and Ryzen 3 2200G into the mix and the results would lead us to believe neither CPU is inferior to the Core i5-8400 when it comes to modern gaming. Of course, there will be the odd extremely CPU intensive title that shows a small dip in performance, but the true difference would be masked by the weaker GPU performance.

I've seen some people suggest reviewers test with extreme high-end GPUs in an effort to make the results entertaining, but come on, that one's just a bit too silly to entertain. As I've said, the intention is to determine which product will serve you best in the long run, not to keep you on the edge of your seat for an extreme benchmark battle to the death.

As for providing more "real-world" results by testing with a lower-end GPU, I'd say unless we tested a range of GPUs at a range of resolutions and quality settings, you're not going to see the kind of real-world results many claim to deliver. Given the enormous and unrealistic undertaking that kind of testing would become for any more than a few select games, the best option is to test with a high-end GPU. And if you can do so at two or three resolutions, like we often do, this will mimic GPU scaling performance.
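To give a sense of how quickly that kind of testing balloons, here is a back-of-the-envelope count of benchmark runs. The 36 games and three resolutions match the Core i5-8400 vs. Ryzen 5 2600 comparison; the number of GPUs and quality presets in the "full matrix" case are hypothetical, picked only to illustrate the growth.

```python
from math import prod


def run_count(**dimensions: int) -> int:
    """Total benchmark runs: one per combination of every test dimension."""
    return prod(dimensions.values())


# One high-end GPU at a single quality preset.
high_end_only = run_count(cpus=2, gpus=1, games=36, resolutions=3, quality_presets=1)

# Hypothetical "real-world" matrix: four GPUs and three quality presets.
full_matrix = run_count(cpus=2, gpus=4, games=36, resolutions=3, quality_presets=3)

print(f"High-end GPU only: {high_end_only} runs")
print(f"Full matrix:       {full_matrix} runs ({full_matrix // high_end_only}x the work)")
```

That count is before repeating each run several times to average out variance, which multiplies both totals again.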

Don't get me wrong, it's not a dumb suggestion to test with lower-end graphics cards, it's just a different kind of test (...) but given GPU-limited testing tells you little to nothing, that's something we try to avoid.

Ultimately I feel like those suggesting this testing methodology are doing so with a narrower viewpoint. Playing Mass Effect Andromeda with a GTX 1060 but using medium settings will see the same kind of frame rates you'll get with the GTX 1080 Ti using ultra quality settings. So don't for a second make the mistake of assuming everyone games under the same conditions as you.

Gamers have a broad and varying range of requirements, so we do our best to use a method that covers as many bases as possible. For gaming CPU comparisons we want to determine in a large volume of games which product offers the best performance overall, as this is likely going to be the better performer in a few years' time. Given GPU-limited testing tells you little to nothing, that's something we try to avoid.

Shopping Shortcuts:

- AMD Ryzen 5 2600X on Amazon, Newegg

- AMD Ryzen 5 2600 on Amazon, Newegg

- Intel Core i5-8400 on Amazon, Newegg

- GeForce GTX 1050 Ti on Amazon

- GeForce GTX 1060 3GB on Amazon

- GeForce GTX 1070 Ti on Amazon

Source: https://www.techspot.com/article/1637-how-we-test-cpu-gaming-benchmarks/

Posted by: nelsontherme98.blogspot.com
